How to Talk to Your Kids About AI Mistakes and Hallucinations

AI gets things wrong, confidently. Here's how to explain AI hallucinations to your kids and turn AI mistakes into learning moments.

AI makes mistakes. Frequently. The problem isn't that AI is wrong sometimes; everything is wrong sometimes. The problem is that AI is wrong with total confidence. It doesn't hedge, pause, or say "I'm not sure about this." It states fabricated facts in the exact same tone as verified ones. For kids, this is genuinely confusing. Here's how to explain it and how to use AI's mistakes as one of the best teaching tools available.

Why AI Makes Mistakes

Start here when talking to your kid. The explanation matters because it changes how they relate to the tool.

The simple version (ages 5-9):

"AI learned by reading millions and millions of things people wrote. When you ask it a question, it guesses what a good answer looks like based on patterns in everything it read. Most of the time it's pretty good. But sometimes the patterns lead it to say something that sounds right but isn't. It's not trying to trick you. It just doesn't know the difference between true and made-up."

The fuller version (ages 10-14):

"AI doesn't store facts like a database. It generates text by predicting what words should come next, one word at a time. Usually those predictions produce accurate information, because the patterns in its training data reflect real knowledge. But sometimes the predictions produce sentences that are confident and completely wrong. This is called 'hallucination.' It's a known problem with how current AI works, and it's not going to be fixed by the next software update. It's fundamental to how the technology operates."

The key idea for both versions: AI doesn't know what it knows. It has no reliable built-in way to check its own accuracy. That's your kid's job.
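If you or an older kid are comfortable with a little Python, the "predicting the next word" idea above can be sketched with a toy program. This is only an illustration, not how any real AI product is built: the three training sentences and the function names train and complete are made up for this example, and real systems learn from vastly more text with far more sophisticated methods. What it shows is how following the most common pattern can produce a sentence that sounds fine and is wrong.

```python
from collections import Counter, defaultdict

# Tiny made-up "training data" (an assumption for this sketch).
# The tower sentence appears twice, so "in paris" is the strongest pattern.
TRAINING_TEXT = (
    "the tower is in paris . "
    "the tower is in paris . "
    "the bridge is in london . "
)

def train(text):
    """Count which word follows which word in the training text."""
    follows = defaultdict(Counter)
    words = text.split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def complete(follows, prompt, max_words=10):
    """Extend the prompt one word at a time with the most common next word."""
    words = prompt.split()
    for _ in range(max_words):
        options = follows.get(words[-1])
        if not options:
            break  # the model has never seen this word, so it stops
        next_word = options.most_common(1)[0][0]
        words.append(next_word)
        if next_word == ".":
            break  # end of sentence
    return " ".join(words)

model = train(TRAINING_TEXT)
print(complete(model, "the bridge is"))
```

The training text says the bridge is in London, but the program prints "the bridge is in paris ." because "in paris" appeared more often. Nothing in the program checks whether the finished sentence is true. It only follows patterns, which is the point to talk through with your kid.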

Common Types of AI Mistakes

Walk through these with your kid so they know what to watch for:

Invented facts. AI will state statistics, dates, and details that it generated on the spot. "The Eiffel Tower is 1,083 feet tall" might be right (it is, counting the antenna added in 2022), but "The tallest tree in Central Park is 142 feet" might be completely made up.

Fictional sources. Ask AI to cite a source and it might invent a book, a research paper, or an expert who doesn't exist. It will even provide convincing-sounding titles and author names for things that were never written.

Confident vagueness. AI sometimes gives answers that sound specific but are actually just elaborate paraphrases of the question. "What causes thunder?" might get a response that sounds authoritative but never gets past "thunder is the sound that follows lightning," without explaining why.

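Blended inaccuracy. AI might take real elements and combine them incorrectly. "Marie Curie discovered radium in 1903 while working at the Sorbonne." Each piece sounds plausible, but the dates, locations, and details are mixed up: 1903 is the year Curie shared the Nobel Prize in Physics, while she and Pierre Curie announced the discovery of radium in 1898.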

Outdated information. AI's training data has a cutoff. It might not know about recent events, new discoveries, or current statistics.

How to Turn Mistakes Into Learning

Here's where AI's flaws become an asset. Most educational tools try to be perfect. AI's imperfection is actually more useful because it gives kids something to push against.

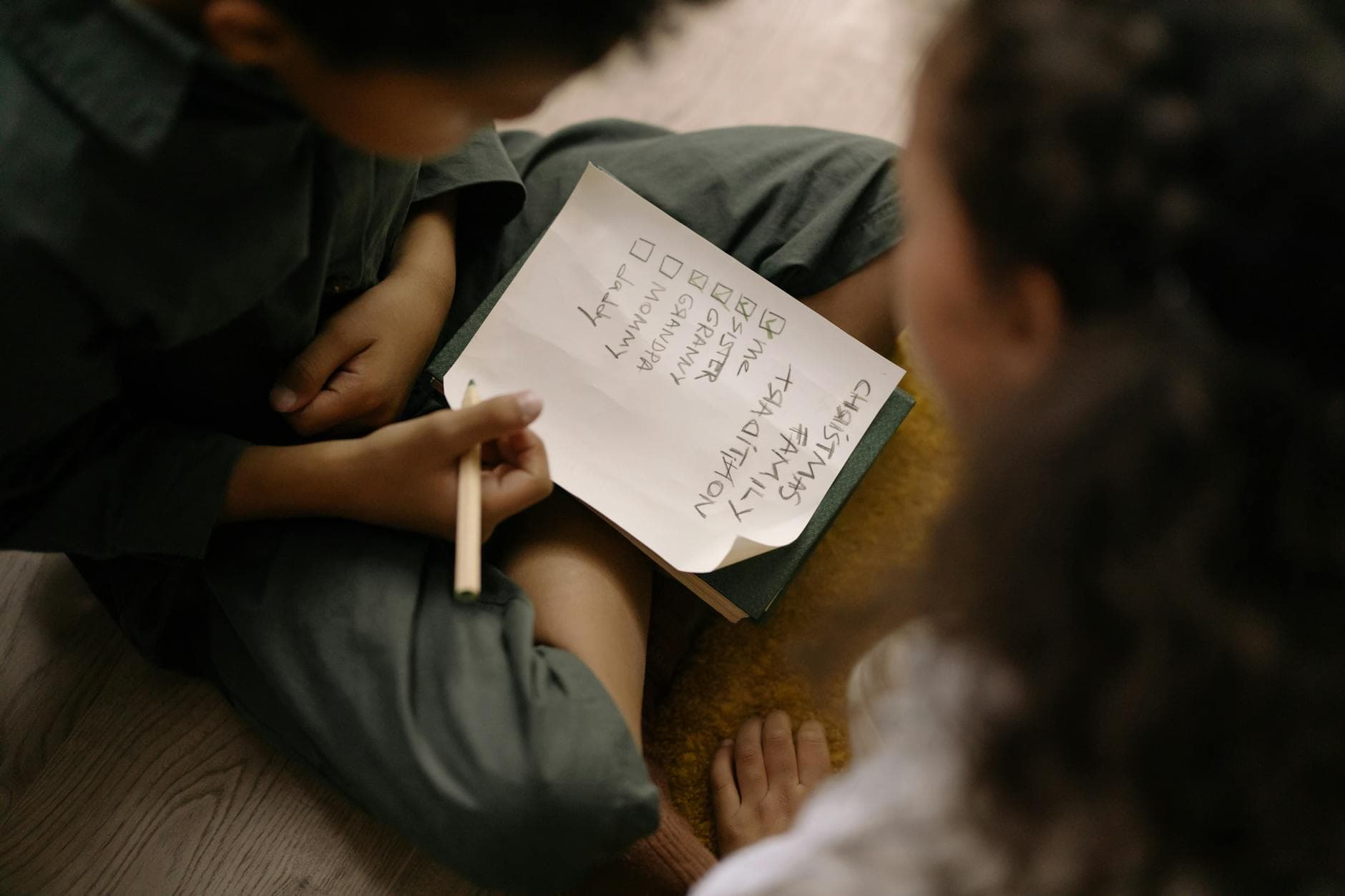

The "Catch AI" Game

Make finding AI mistakes fun. After any AI interaction, challenge your kid: "Can you find something in there that's wrong?" Give them a point for every error they catch. This flips the dynamic from "AI is the authority" to "I'm the quality checker."

The "Why Was It Wrong?" Discussion

When your kid catches an error, don't stop at "that's wrong." Ask: "Why do you think AI said that?" This leads to genuinely interesting conversations:

- "Maybe it mixed up two similar facts."

- "Maybe the stuff it learned from had the wrong information."

- "Maybe it was guessing because it didn't really know."

All of these are reasonable hypotheses, and they demonstrate understanding of how AI works.

The "Fix It" Exercise

When AI produces something with errors, have your kid rewrite the incorrect parts with accurate information. This is active learning: they research the correct answer, compare it to AI's version, and produce a corrected document. The result is better than what AI produced on its own, which reinforces that a human working with AI does better than AI alone.

What Not to Do

Don't use AI mistakes to scare your kid away from AI. "See, you can't trust it!" is the wrong lesson. The right lesson is "you can use it, but you verify." Fear leads to avoidance. Healthy skepticism leads to competent use.

Don't pretend you always know when AI is wrong. You won't. AI has fooled you too. It's fooled everyone. Being honest about that models good behavior: "I thought that sounded right, but let's check."

Don't only focus on mistakes. If every AI session turns into an error-hunting exercise, it stops being fun. Balance fact-checking with the creative and productive parts of AI use. Fact-checking is a habit that runs in the background, not the main event.

Age-Appropriate Responses to Common AI Errors

When your kid encounters an AI mistake, how you respond matters. Here's what works at each age:

Ages 5-7: "Oops, AI got that one wrong! Good thing we're checking. Let's find the real answer." Keep it light. No big deal. Just part of how we use the tool.

Ages 8-10: "Interesting, that doesn't seem right. How should we verify it?" Let them take the lead on checking. Guide them toward reliable sources but let them do the work.

Ages 11-14: "AI hallucinated there. What tipped you off? How would you have caught it if you weren't looking for it?" Push toward meta-cognition: understanding their own process for detecting errors.

The Bigger Picture

Teaching your kid about AI mistakes isn't just about AI. It's about building a human who questions confident claims, checks sources, and doesn't accept information at face value, whether from AI, the internet, the news, or anyone. AI just makes it easy to practice because it's confidently wrong often enough to provide regular opportunities.

A child who grows up catching AI's mistakes is better prepared to become an adult who catches misinformation everywhere. That's the real skill here.

Start This Week

Next time you use AI with your kid, intentionally look for an error together. You'll probably find one. Then talk about it. That one conversation can do more for your child's critical thinking than a week of worksheets.

For activities that build this skill into every session, Big Thinkers designs each activity so your child evaluates, questions, and improves on AI's output, not just accepts it.
